Scribble-Go

Scribble-Go is a toolchain for safe distributed programming using role-parametric session types in Go. It extends Scribble, a toolchain for safe distributed programming using basic (non-role-parametric) session types in Java, with:

- support for role-parametricity;

- support for Go.

Abstract

An archive of the toolchain can be found here, 2.5GB. A version will appear in the ACM Digital Library as an artifact to the paper (doi:10.1145 / 3291614).

This document describes the artifact for “Distributed Programming using Role-Parametric Session Types in Go”, David Castro, Raymond Hu, Sung-Shik Jongmans, Nicholas Ng, and Nobuko Yoshida, published in the proceedings of POPL 2019.

The latest version of this document and materials can be found via the Scribble-Go project repo: see here.

We explain how to get the Scribble-Go toolchain running on the artifact VM (virtual machine), we give instructions on how to run our examples (demos and benchmarks), and we provide tutorials to get hands-on experience with the toolchain (including small exercises).

Table of contents

Overview

Introduction

Scribble-Go is a toolchain for safe distributed programming using role-parametric session types in Go. It extends Scribble, a toolchain for safe distributed programming using basic (non-role-parametric) session types in Java, with:

- support for role-parametricity;

- support for Go.

To summarise the content of the paper relative to Scribble-Go: the key contributions of Scribble-Go are described in Sect. 2; an example-driven overview of the Scribble-Go methodology is given in Sect. 3; the theory behind Scribble-Go is presented in Sect. 4; the implementation of Scribble-Go is presented in Sect. 5; benchmarks and additional examples are presented in Sect. 6.

Programming methodology

- The user writes a (role-parametric) global protocol specification in the Scribble-Go language (Sect. 5.1).

- The Scribble-Go toolchain checks the global protocol specification for well-formedness (Sect. 4.5), infers role variants (Sect. 4.3), computes the projections onto the inferred role variants (Sect. 4.4), translates the resulting local protocol specifications to CFSMs (Sect. 5.2), and finally generates APIs based on the CFSMs (Sect. 5.3).

- The user writes Go endpoint programs that use the APIs generated for the global protocol. The Scribble-Go toolchain guarantees that a statically well-typed endpoint will never perform a non-compliant I/O actions w.r.t. the run-time instantiation of the global protocol, up to premature session termination (Sects. 4.5 and 5.4).

Sect. 3 exemplifies this methodology in detail.

Aims of this artifact

The purpose of this artifact is to support the practical claims of the paper:

- (1) Practical metholodogy for distributed programming of role-parameteric protocols in Go based on the theory (Sect. 3 and Sect. 5).

- (2) Run-time overhead benchmarks (Sect. 6.1).

- (3) Applications (Sec 6.2).

Our artifact comprises four main components:

- (A) A VM

scribble-go-popl19-artifact.ovawith our software pre-installed and graphical UI scripts for running the software. - (B) This document, which explains:

- how to install and run the VM;

- how to browse and run the example demos and benchmark applications.

- (C) A supporting document

tutorials.html, which guides a hands-on experience of using our tools through a few tutorials. - (D) A package

benchmarks.zipthat allows to run our benchmarks outside the VM.

Claim (1) is supported by (B) that allows to browse and run all of the examples listed in Fig. 15 of the paper implemented using our tools, and (C) that offers a more hands-on feel for using our tools. We have prepared (B) and (C) to demonstrate all the core features of our Scribble-Go framework:

- Protocol specification: core language constructs for parameterisation/foreach/indexed roles

- Distribution: variant inference, family inference, well-formedness checking and projection

- Practical application: Go API generation and endpoint implementations, with transport-independence (shared memory and TCP supported)

To be more specific about (B), of the applications #9–21 (Fig. 15), the “fully implemented” demos are:

- #9

Pget– our main running example (Sect. 3).

The client allows (parallelised) fetching of pages from the Web, and the interoperable server supports (parallelised) handling of fetcher requests. - #14–16 Go applications from the Computer Language Benchmarks Game

repository

(CLBG),

revised using our tools, that:

- (i) count occurrences of molecule sequences in a DNA string (

k-nucleotide); - (ii) match regex patterns against a DNA string (

regex-redux); - (iii) computes the greatest eigenvalue of a matrix (

spectral-norm).

We also use these applications for the macrobenchmarks (Sect. 6.2). The application-level outputs of our revised versions have been checked against the original CLBG versions.

- (i) count occurrences of molecule sequences in a DNA string (

- #17 A pipeline-based parallel Fibonacci computation.

For the remaining applications, we use our tools to specify their global protocols and implement the skeleton endpoint programs to be executable in terms of their I/O (as stated in Sect. 6.2). However, we do not fill these skeletons with “local” computation code (that is orthogonal to the I/O structure), so for the most part they do not perform any real application-level computations (beyond token decision making).

For claim (2), the VM (A) (via the instructions in (B)) only

directly replicates our benchmarking methodology. Running the benchmarks

inside the VM is not suitable for replicating the actual results presented

in the paper (Sect. 6.1 and 6.2), that were conducted on the machine with the

stated specification. To obtain more meaningful results, we also provide a separate

benchmarks.zip (D) that allows to run the benchmark

applications as a standalone package outside the VM (requirements and

instructions included in the zip). Alternatively, the user

may try to export the benchmark applications from the VM to a local setup.

Note. By default, our artifact currently intends the user to cancel the

execution of the microbenchmark applications at some point after observing

them running. Otherwise, the microbenchmarks will eventually encounter

out-of-memory issues – we supply a

32-bit VM while our benchmarks were orginally designed for a 64-bit machine

with 16GB of RAM. We do not intend for the benchmarks to be run to

completion inside the VM (as the results are not meaningful) – please see the

FAQ below for pointers on bypassing this issue inside the VM, and see

the benchmarks.zip mentioned above for running the benchmarks outside the VM.

Claim (3) is directly attested by (B).

Scope

We are in the process of stabilising and merging our Scribble-Go prototype into the master branch of the central Scribble repository. To prepare this artifact for the above purposes, we have disabled the following features related to usability/cosmetics of the Scribble-Go toolchain in the artifact VM:

- Syntactic sugars in protocol specifications for

pairandpipe(we use the more generalforeach) - “

.-based” type generation (used in Sect. 3); instead, we use the “_"-based type generation (explained in Sect. 5). For instance, users should writem_F_1_Receive_Meta(&meta)instead ofm.F_1.Receive.Meta(&meta)(cf. line 17 in Fig. 5) to do this input action. - General compacting of names of generated packages, types, and functions. For

instance, users should write

M_F_1toK_not_1to1_Accept(i, ssi)instead ofM.F_2toK.Accept(i, ssi)(cf. line 10 in Fig.5) to do this accept action.

Note. The error messages reported by the toolchain need improvement. For instance, if a global protocol specification is badly-formed, the Scribble-Go toolchain may currently just print a full stack trace or a not very clear message.

Quick start

Installing/running the artifact VM

The following instructions have been tested using VirtualBox 5.2.18 on macOS {10.13, 10.14}, VirtualBox 5.2.22 on Debian 9, and VirtualBox 5.2.22 on Windows 10.

- Open VirtualBox

- File > Import Appliance

- Select

scribble-go-popl19-artifact.ova, then click “Continue”, then click “Import”

The artifact VM can now be started by selecting it and clicking “Start”.

To login: use the user name Ubuntu with an empty password.

Demos

Open “POPL'19 artifact demo” on the desktop to enter the demos menu (i.e., all systems listed in Fig. 15). Each demo will:

- show a (role-parametric) global protocol specification in the Scribble-Go language;

- run the Scribble-Go toolchain to generate APIs;

- show the interfaces of the generated APIs as an HTML page, as explained in more detail in Sect. “Exploring generated APIs";

- show endpoint programs in Go that use the generated APIs;

- run the endpoint programs, including the generated APIs, as a distributed system in a number of separate OS processes; at this point, one terminal will open for each endpoint.

As our main aim of these demos is to showcase the expressiveness of the Scribble-Go toolchain (and the underlying theory), we focused first and foremost on implementing the protocol/communication aspects of the applications listed in Fig. 15 (entries 9-21; entries 1-8 are not full applications anyway). However, to make the demos more attractive, we also extended the resulting “skeleton implementations” of some applications with real or mock computations. We emphasise that these extensions are essentially orthogonal to our actual aim. Specifically:

- Entries 9 (Pget, core running example) and 14, 15, 16 (CLBG, benchmark programs), and 17 (Fibonacci): The implemented endpoint programs perform I/O actions and real computations (e.g., when Pget is run, a url needs to be specified from which content is subsequently fetched and printed).

- Entries 10, 18, 21: The implemented endpoint programs perform I/O actions and mock computations (e.g., when Vickrey auction is run, the bidders bid hard-coded values).

- Entries 11, 12, 13, 19, 20: The implemented endpoint programs perform only I/O actions, without real or mock computations.

Benchmarks

Inside the VM

Open “POPL'19 artifact benchmarks” on the desktop to enter the benchmarks menu (i.e., all systems discussed in Sect. 6.1). The menu has four items:

- Microbenchmarks – Shows dialogs to explain how to run the microbenchmarks; performs a test run of a single iteration in a fresh terminal; performs a full run of all iterations in a fresh terminal (warning: this can take a long time to finish; press CTRL+C to manually abort). We note that the artifact VM ships with the same benchmark code that we used to produce the charts shown in Fig. 14. However, running the benchmarks on the artifact VM may give rise to different results than those plotted in Fig. 14.

- k-nucleotide – Shows dialogs to explain how to run the k-nucleotide benchmark of CLBG; performs a test run of a single iteration in two fresh terminals (one for the original CLBG version, and one for the Scribble-Go version); performs a full run of all iterations (warning: this can take a long time to finish; press CTRL+C to manually abort). We note that the artifact VM ships with almost the same benchmark code that we used to produce the charts shown in Fig. 14; the only difference is that we regenerate APIs every time the benchmarks are run.

- regex-redux – Similar to k-nucleotide

- spectral-norm – Similar to k-nucleotide

Outside the VM

We provide the following instructions to run the benchmarks outside the VM (using benchmarks.zip); these instructions can also be used to run the benchmarks manually inside the VM (but this is discouraged if the aim is to replicate our results, as explained above). The following instructions assume GOPATH is set (inside the VM, this is already done).

-

Macrobenchmarks

cd $GOPATH/src/github.com/nickng/scribble-go-examples/14_k-nucleotide; REPEAT=1 ./run_knuc # Run once ./run_knuc # Run 20 times (default, as paper)cd $GOPATH/src/github.com/nickng/scribble-go-examples/15_regex-redux; REPEAT=1 ./run_regex # Run once ./run_regex # Run 20 times (default, as paper)cd $GOPATH/src/github.com/nickng/scribble-go-examples/16_spectral-norm; REPEAT=1 ./run_spectral # Run once ./run_spectral # Run 20 times (default, as paper) -

Microbenchmarks

cd $GOPATH/src/github.com/nickng/scribble-go-examples/microbenchmarks \ go test -bench . -timeout 0 ./... # Run once go test -bench . -timeout 0 -count 40 ./... > microbenchmarks.time # Run 40 times (as paper)The last command redirects outputs of the benchmark to

microbenchmarks.timefile.

Benchmarks FAQ

Running the microbenchmarks inside the VM gives me out-of-memory problems.

The demonstration of our benchmark methodology in the VM is currently intended to be cancelled by the user at some point after observing them running. Please see the aims above.

The results from running the benchmarks inside the VM do not match those in the paper.

The VM itself replicates our benchmark methodology, but does not replicate our results. Please see the aims above.

I want to run the benchmarks inside the VM from the command line.

See the next question. (And also the next next question, for outside the VM.)

I want to run the microbenchmarks from start to finish inside the VM without any out-of-memory problems.

This can be achieved by reducing the number of repetitions/runs peformed by

the microbenchmarks. The following commands will run the microbenchmarks

with the duration of each run (we use the standard go test -bench

facility) set to 250ms, and for a single repetition per run. In the VM,

open a terminal and do:

cd ~/scribble-go-examples/microbenchmarks; \

go test -bench . -timeout 0 -benchtime 0.25s ./...

I want to replicate the results presented in the paper (by running the benchmarks in a replication of the actual benchmark environment stated in the paper, i.e., outside the VM).

Please see the benchmarks.zip package mentioned above.

Where is the benchmark output? What is the output format?

-

Macrobenchmarks.

The benchmark outputs (tab separated values) writes to standard output as it runs, and the results are also stored in the same directory of the source code. The format is “inputsize[TAB]parameter K[TAB]execution time”.

$benchmark_name/original.time

$benchmark_name/scribble.time

```

where `$benchmark_name = { 14_k-nucleotied, 15_regex-redux, 16_spectral-norm }`.

-

Microbenchmarks.

The benchmark outputs to the standard output as it runs. The results are not stored on file.The output format is described in the README in the

benchmark.zipmentioned above. We repeat the description below:The output format of the benchmark results (which is written to standard output) is described in details in the Go language documentation. Here is a sample output:

goarch: amd64 pkg: github.com/nickng/scribble-go-examples/microbenchmarks/alltoall BenchmarkManualSM/N=2-4 200000 727 ns/op BenchmarkManualSM/N=3-4 100000 1386 ns/op

>

> `pkg` is the package. There are three microbenchmark packages:

> `alltoall`, `gather`, and `scatter`. The output:

> ```plainterm

BenchmarkManualSM/N=2-4 200000 727 ns/op

> Means that the benchmark `ManualSM/N=2` (handwritten, shared memory,

> parameter `N` set to 2) was executed 200000 times at 727ns per run. If

> the benchmark name contains `Scribble` instead of `Manual`, it means

> the benchmark is using the Scribble-Go runtime. If the benchmark name

> contains `TCP` instead of `SM`, it means the benchmark is using TCP

> transport instead of shared memory transport.

Tutorials

Besides the VM itself and graphical execution scripts for the demos, the other

main component of our artifact are the hands-on tutorials in tutorials.html.

Please see that document for details.

Exploring generated APIs

Code organisation

Logically, Go code is organised in packages: each package consists of a number

of types, and each type consists of a number of functions. “Physically”,

every package is contained in a directory; every type and every function is

defined in a .go-file in that directory. A subpackage is a package that is

contained in a subdirectory of another package. For every (well-formed) global

protocol specification, the Scribble-Go toolchain generates one protocol

package and one or more role variant subpackages, as explained in more detail

below.

The Scribble-Go toolchain uses the

godoc tool to generate

HTML documentation for generated APIs (similar to Python’s Docstring and Java’s

Javadoc). In our experience, initially exploring the generated APIs through the

HTML documentation is an effective way to get a first impression of how the

generated APIs guide/force programmers towards communication-safety; if and when

a more detailed view is required, the code can be accessed directly through the

HTML documentation.

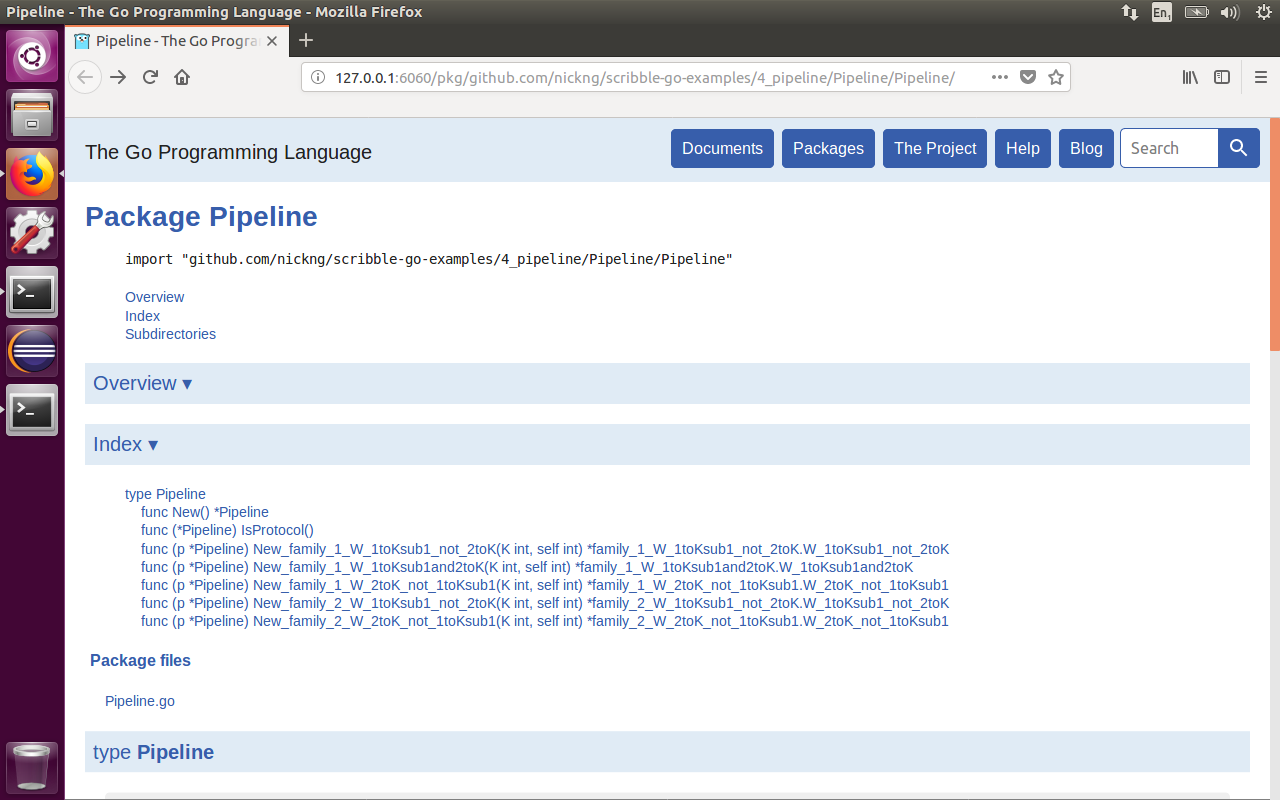

We now explain the structure of generated APIs in more detail, based on the HTML documentation for the Pipeline protocol. (Run the Pipeline demo as explained in Sect. “Demos” to open the HTML documentation.)

Protocol package

The following screenshots shows the page for the Pipeline protocol package:

We make the following initial remarks:

- The top of the page shows the full name of the protocol package, namely

github.com/nickng/scribble-go-examples/4_pipeline/Pipeline/Pipeline. Generally, the location of the code can be derived from such a full name as follows: if the full name isgithub.com/nickng/scribble-go-examples/<x>/<y>/<z>, the location of the code is~/go/src/github.com/nickng/scribble-go-examples/<x>/<y>/<z>. The code can also be accessed directly through the HTML documentation, as shortly explained. - A generated protocol package always consists of one file (e.g., Pipeline.go) with one type (e.g.,

Pipeline). - A protocol package has a number of subpackages (listed as subdirectories at the bottom of the page; not shown in the screenshots), namely one for each inferred family (e.g.,

family_1for the K>2 family inferred for the Pipeline protocol, andfamily_2for the K=2 family). These subpackages are “degenerate” (i.e., they do not define any types or functions), and they serve only as containers for role variant subpackages. In the special case that only one family is inferred, its role variant subpackages are placed directly in the protocol package (i.e., without an intermediatefamily_1subpackage).

Type Pipeline is the main type of the generated code; it forms the starting

point to participate in a Pipeline session. Specifically, function New creates

a new Pipeline-object (which represents a Pipeline session), while the

remaining functions create new endpoint objects for endpoint programs that

participate in the session.

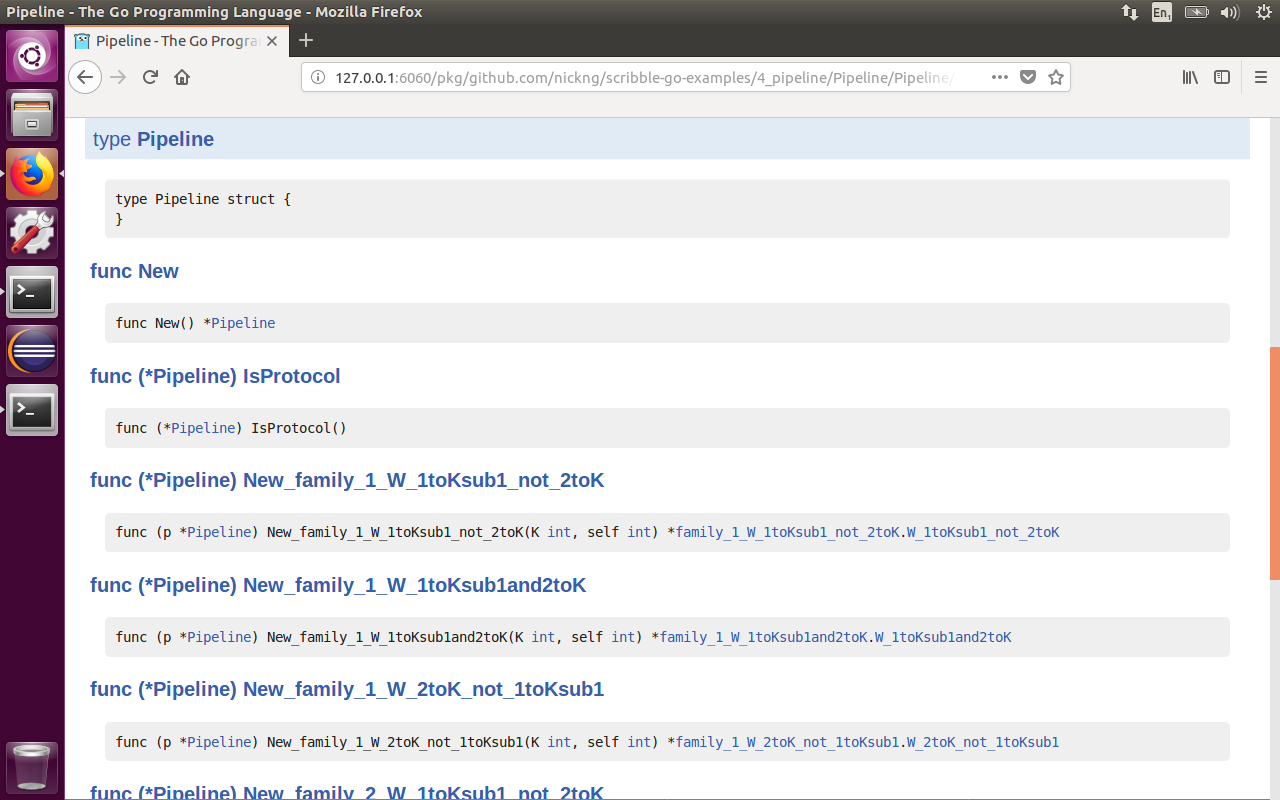

For instance, this function signature:

func (p *Pipeline) New_family_1_W_1toKsub1and2toK(K int, self int) *family_1_W_1toKsub1and2toK.W_1toKsub1and2toK

can be understood as follows:

func (p *Pipeline)– The receiver type of the function (i.e., the type on which the function is called).New_family_1_W_1toKsub1and2toK– The name of the function. It can be understood as follows:New_– Create a new endpoint…family_1_– …in a session of theK>2family…W_– …for a Worker…1toKsub1– …whose self-id is both in interval1..K-1…and2toK– …and in interval2..K(i.e., the self-id is in interval2..K-1, i.e., the Worker is a middleman)

(K int, self int)– The arguments of the function, namely: the total number of Workers and the self-id*family_1_W_1toKsub1and2toK.W_1toKsub1and2toK– The return type, namely typeW_1toKsub1and2toKof packagefamily_1_W_1toKsub1and2toK. This is the endpoint object type for middle Workers.

By clicking on the name of a function in the “type section” of the page (second screenshot above), the code that implements that function is opened. By clicking on the name of a package or type, the page for that package or type is opened.

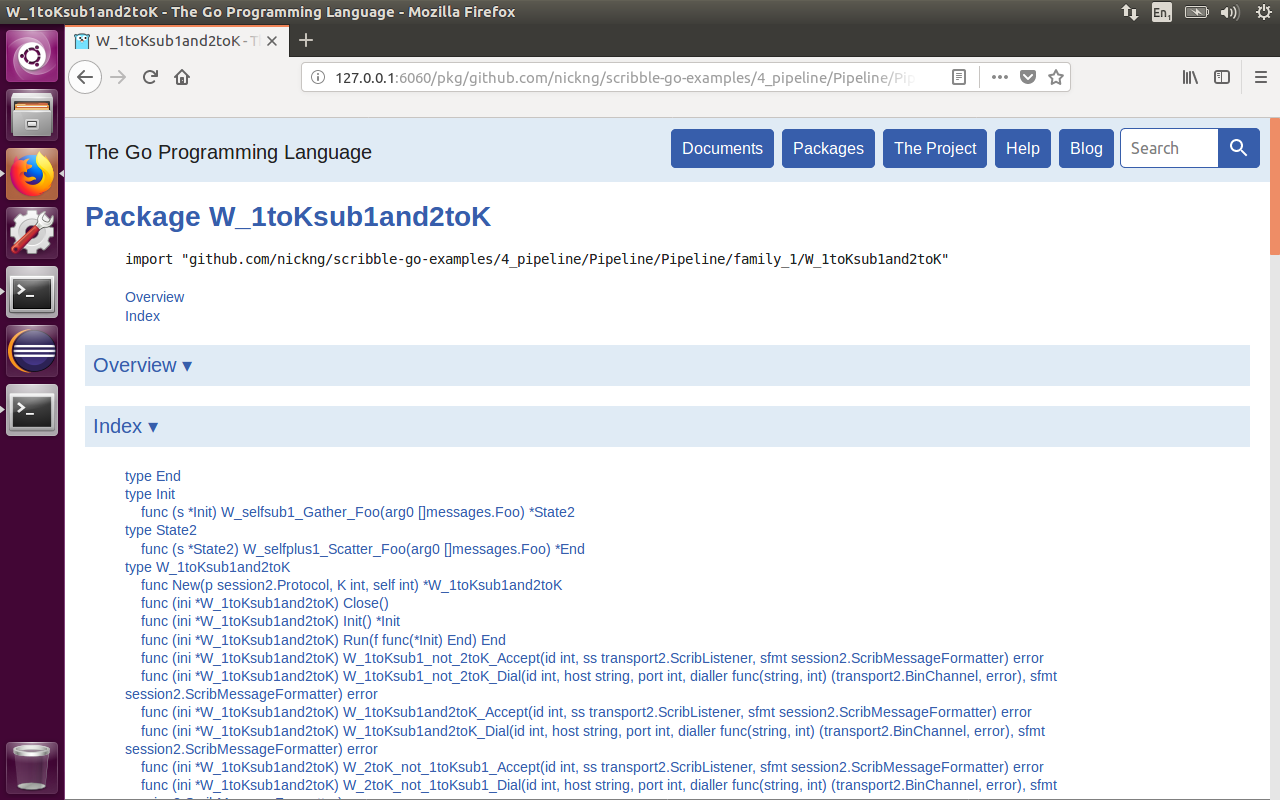

Role variant subpackages

The following screenshot shows the page for a role variant subpackage.

The general structure is the same as for a protocol package. However, there are more types:

W_1toKsub1and2toK– The main type (i.e., the endpoint object type; as explained above,Pipeline-objects create a new instance of this type each time functionNew_family_1_W_1toKsub1and2toKis called). The type has the following functions:..._Acceptand..._Dial– Initialise the underlying communication infrastructure.Init– Create the initial state channel for an endpoint program.Run– Run the endpoint program. The argument of this function is a function (i.e., the endpoint program) that consumes an initial state channel (i.e., anInit-object, explained below) and produces a final state channel (i.e., anEnd-object, explained below); the final state channel can be obtained only if the endpoint programs complies with the protocol.

Init– Type for initial state channels. It provides one function for every I/O action that an endpoint program is allowed to perform in the initial state of the session. For instance, in the initial state of the Pipeline protocol, a middle Worker is allowed only to receive a message from its predecessor, so accordingly, typeInithas only one function,W_selfsub1_Gather_Foo. This function’s signature can be understood as follows:func (s *Init)– The receiver type of the function (i.e., this function can be called only on an initial state channel).W_selfsub1_Gather_Foo– The name of the function. It can be understood as follows:W_– From all Workers…selfsub1_– …with ids betweenself-1andself-1(inclusive)…Gather_Foo– …gather message of typeFoo. The gather action is a many-to-one generalisation of the receive action. Similarly, we use a scatter action as a one-to-many generalisation of the send action. Here, the gather is used for the special one-to-one case (i.e., morally, a normal receive).

(arg0 *[]messages.Foo)– The argument of the function, namely (a pointer to) a data structure in which the received messages are stored.*State2– The return type of the function, namely the successor state channel.

State2– Type for successor state channels. The rationale is the same as for initial state channels (i.e., the type provides exactly one function for every I/O action that an endpoint program is allowed to do in the second state of the session). Crucially, the only proper way to obtain aState2-object is by calling functionW_selfsub1_Gather_Fooof typeInit. As a result, it is impossible for a middle Worker to send to its successor (which is allowed only in the second state), before it has received from its predecessor.End– Type for final state channels. It provides no functions, signifying that the end of the session has been reached.

It may be instructive to “click through the types” to “simulate” a session (especially for larger protocols), as demonstrated in the following video:

Runtime

The Scribble-Go runtime contains all low-level infrastructure code that is

used by the generated APIs. The code of the runtime is located at

~/go/src/github.com/rhu1/scribble-go-runtime/.

VM contents overview

Our software is installed in the VM by the following three (open source) git repository clones:

-

scribble: our prototype extension of Scribble for Go (currently implemented over the masterscribble-javacodebase). The location of the git clone in the VM is:

/home/ubuntu/scribble

It is cloned from: `https://github.com/scribble/scribble-java/tree/popl19-artifact`.

* `scribble-go-runtime`: the Scribble-Go Runtime is used by our generated APIs

to initialise session endpoints, perform connection actions and session I/O.

It supports shared memory (`shm`) and TCP (`tcp`) as message transports.

It is cloned in the VM at:

```plainterm

/home/ubuntu/scribble-go-runtime

It is symlinked (for the convenience of some import paths in the example Go

programs) to: /home/ubuntu/go/src/github.com/rhu1/scribble-go-runtime.

It is cloned from:

https://github.com/rhu1/scribble-go-runtime/tree/popl19-artifact.

scribble-go-examples: is our main examples and benchmarks repository. It is cloned in the VM at:

/home/ubuntu/scribble-go-examples

It is symlinked (for the convenience of some import paths in the example Go

programs) to:

/home/ubuntu/go/src/github.com/nickng/scribble-go-examples.

It is cloned from: https://github.com/nickng/scribble-go-examples.

The artifact was built by:

- Building a base VM using vagrant with base box

ubuntu/xenial32. - Installing the required software (Java, Maven, Go, Eclipse/Goclipse), and configuring the environment parameters.

- Cloning and building the above repositories.

- Implementing the UI scripts.

Our example applications (which include the macrobenchmarks, #14–16) and the

microbenchmarks are subdirectories in the scribble-go-examples directory

with the layout (or a close variation of):

|- ProtocolName.scr # The Scribble protocol

|- internal

|- protocolname

|- protocolname.go # The "core implementation" code of all roles

|- protocol-R # R is the role name

|- main.go # The "main" file of the role R, "go build" in this

# directory creates a binary "protocol-R" to run the role

|- ProtocolName

|- ... generated code ... # These do not exist before running

# "go generate" (i.e. using Scribble to generated the APIs)